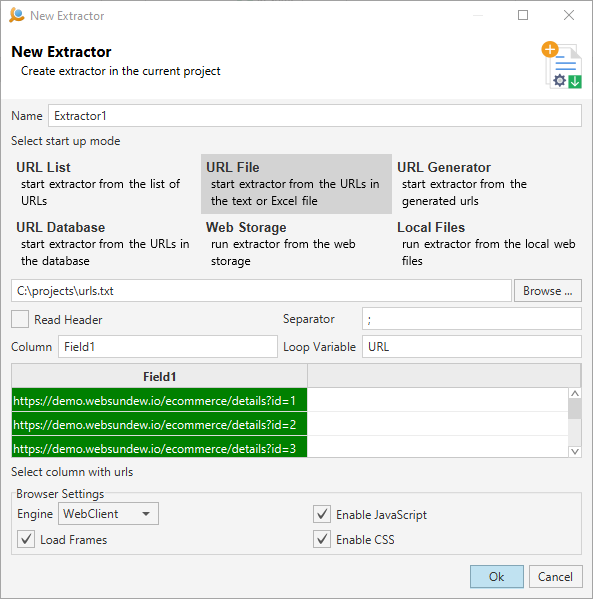

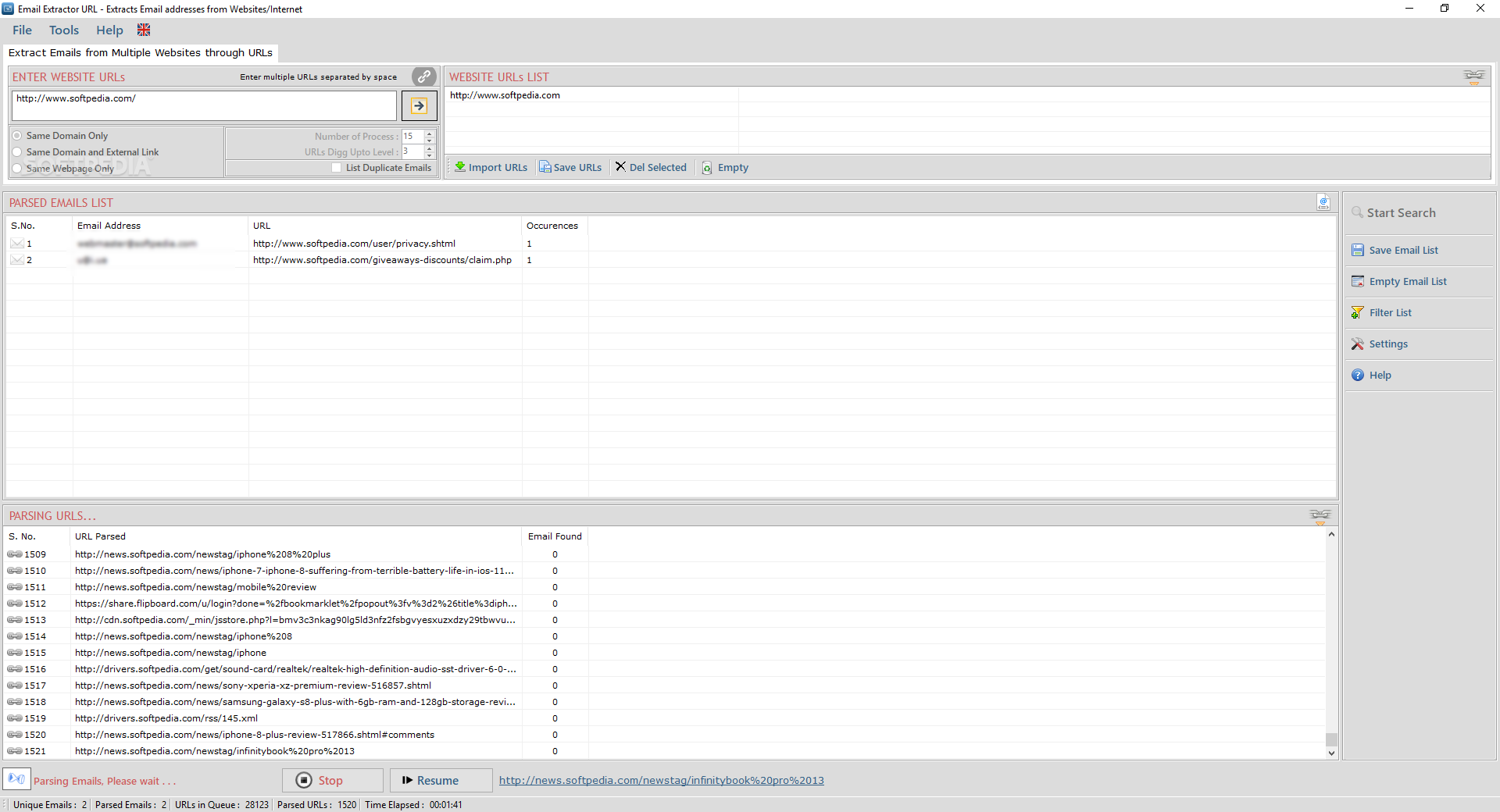

Article list extraction supports pages which contain multiple articles. Examples of such pages are main or category pages of news sites, main pages of blogs showing multiple posts, and other pages with multiple articles. The article list page type is especially useful for implementing crawling, where extracted article URLs are sent to the article API and extracted pagination URLs are sent to the article list API. Usually each extracted article contains a link, which can be passed to Article Extraction, which supports single article pages. This supports use cases such as news and media monitoring, analytics, brand monitoring, mentions, and sentiment analysis.

Data Extractor API access key and authentication: after signing up, every developer is assigned a personal API access key, a unique combination of letters and digits that grants access to the API endpoint. To authenticate with the Article Data Extractor REST API, include your bearer token in the Authorization header.

URL Extractor's features include:

- Extracts directly from the Web, cross-navigating Web pages in the background.
- Extracts via search engines, starting from keywords and navigating through all the linked pages, from one page to the next without limit, all from a single keyword.
- Google extraction from specific international Google sites, with URL extraction focused on an individual country and language.
- Extracts Web addresses, FTP addresses, email addresses, feeds, Telnet, local file URLs, and news.
- Uses the latest Cocoa multi-threading technology, with no legacy code inside.
- Uses separate threads for the extraction process and Web navigation, so there is no freeze during extraction, even on heavy tasks.
- Uses Resume, Auto Save, Versions, and Full screen.

This paper presents a DOM-based method for extracting website information that preserves only the theme information, in order to improve search efficiency.
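The crawling pattern and bearer-token authentication described above could be sketched roughly as follows. This is only an illustration under stated assumptions: the endpoint URLs, the `url` query parameter, and the `articles` / `pagination` keys of the result are hypothetical names, since the text does not give the real API shape. The request is built but never sent.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical endpoints; the source does not state the real URLs.
ARTICLE_API = "https://api.example.com/article"
ARTICLE_LIST_API = "https://api.example.com/article-list"


def build_request(endpoint, page_url, token):
    """Build (without sending) a request that carries the bearer token."""
    # The `url` query parameter is an assumption made for illustration.
    query = urlencode({"url": page_url})
    return Request(
        f"{endpoint}?{query}",
        headers={"Authorization": f"Bearer {token}"},
    )


def route(list_result):
    """Turn one article-list result into follow-up (endpoint, url) jobs.

    Article links go to the article API; pagination links go back to the
    article list API. The dictionary keys used here are assumed.
    """
    jobs = []
    for article in list_result.get("articles", []):
        if article.get("url"):
            jobs.append((ARTICLE_API, article["url"]))
    for next_page in list_result.get("pagination", []):
        jobs.append((ARTICLE_LIST_API, next_page))
    return jobs
```

A crawler built this way would keep a queue of jobs, call `build_request` for each one, and feed every article-list response back through `route` until the queue is empty.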

URL Extractor is a Cocoa application that extracts email addresses and URLs from files, from the Web, and via search engines. It can start from a single Web page and navigate all the links inside, looking for emails or URLs to extract, and save everything to the user's hard disk. It can also extract from a single file or from all the contents of a folder on your hard disk, at any level of nesting, even across thousands of files. Once done, it can save URL Extractor documents to disk, containing all the settings used for a particular folder, file, or set of Web pages, ready to be reused. Alternatively, the extracted data can be saved to disk as text files, ready for the user's own purposes.

It allows the user to specify a list of Web pages to use as navigation starting points, and it moves on to other Web pages by cross-navigation. You can also specify a series of keywords; it then searches for Web pages related to the keywords via search engines and starts a cross-navigation of those pages, collecting URLs. In Web extraction mode it can navigate for hours without user interaction, extracting all the URLs it finds in the Web pages it visits unattended, or starting from a single search engine query using keywords and following all the resulting and linked pages without limit.

Web scraping is a way to get data from a website by sending a query to the requested page, then combing through the HTML for specific items and organizing the data.

Article extraction supports pages which contain a single article, such as a news article, blog post, or another kind of article.
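To make the scraping and cross-navigation ideas above concrete, here is a minimal sketch using only the Python standard library. It is not how URL Extractor itself is implemented (that is a Cocoa application); the class and function names, the simplified email pattern, and the caller-supplied `fetch` function are all assumptions made for illustration.

```python
import re
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Deliberately simple email pattern; real-world addresses can be more varied.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


class LinkAndEmailParser(HTMLParser):
    """Collect link targets and email addresses from one HTML page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.urls = set()
        self.emails = set()

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        if href.startswith("mailto:"):
            # Drop any "?subject=..." suffix after the address.
            self.emails.add(href[len("mailto:"):].split("?")[0])
        else:
            # Resolve relative links against the page's own URL.
            self.urls.add(urljoin(self.base_url, href))

    def handle_data(self, data):
        self.emails.update(EMAIL_RE.findall(data))


def extract(html, base_url):
    """Return (sorted URLs, sorted emails) found in one page."""
    parser = LinkAndEmailParser(base_url)
    parser.feed(html)
    parser.close()
    return sorted(parser.urls), sorted(parser.emails)


def crawl(start_urls, fetch, max_pages=50):
    """Breadth-first cross-navigation from a list of starting pages.

    `fetch(url)` is supplied by the caller and returns HTML text or None.
    """
    queue = deque(start_urls)
    visited, all_urls, all_emails = set(), set(), set()
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        if url in visited:
            continue
        visited.add(url)
        html = fetch(url)
        if html is None:
            continue
        urls, emails = extract(html, url)
        all_urls.update(urls)
        all_emails.update(emails)
        # Only follow Web links; FTP and other schemes are recorded, not visited.
        queue.extend(u for u in urls if u.startswith(("http://", "https://")))
    return sorted(all_urls), sorted(all_emails)
```

Passing `fetch` in as a parameter keeps the sketch free of network code. A real run could supply a function built on `urllib.request.urlopen`, and `max_pages` gives a bound that an unattended, open-ended crawl would otherwise lack.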
